Airflow docker requirements.txt

7/23/2023

Configure an interpreter using Docker Compose

Prerequisites

Make sure that the following prerequisites are met:

Docker is installed. You can install Docker on various platforms, but here we'll use the Windows installation. If you want to repeat this tutorial on other platforms, use the Docker installations for macOS and Linux (instructions for Ubuntu and other distributions are available as well).

You have a stable Internet connection, so that PyCharm can download and run busybox:latest (the latest version of the BusyBox Docker Official Image). Once you have successfully configured an interpreter using Docker, you can go offline.

Before you start working with Docker, make sure that the Docker plugin is enabled. The plugin is bundled with PyCharm and is activated by default. If the plugin is not activated, enable it on the Plugins page of the IDE settings (Ctrl+Alt+S) as described in Install plugins.

In the Settings dialog (Ctrl+Alt+S), select Build, Execution, Deployment | Docker, and under Connect to Docker daemon with, select the "Docker for" option that matches your operating system. For example, if you're on macOS, select Docker for Mac.

Note that you cannot install any Python packages into Docker-based project interpreters.

Let's use a Django application with a PostgreSQL database running in a separate container.

Out of the box, Airflow uses a SQLite database, which you should outgrow fairly quickly since no parallelization is possible using this database backend. It still allows you to get up and running quickly and take a tour of the UI.

As you grow and deploy Airflow to production, you will also want to move away from the standalone command we use here to running the components separately. You can read more in Production Deployment.

From here, you can trigger a few task instances from the command line.
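The commands that trigger a few task instances, mentioned above, might look like the following sketch. It assumes the Airflow 2.x CLI (`airflow tasks test` and `airflow dags backfill`) and the bundled `example_bash_operator` DAG with its `runme_0` task; the dates and the helper function names are hypothetical and chosen only for illustration. The script only prints the command lines, so nothing is executed until you run them yourself.

```python
from typing import List

# Assumption: the bundled example DAG and one of its tasks.
DAG_ID = "example_bash_operator"
TASK_ID = "runme_0"


def task_test_command(dag_id: str, task_id: str, logical_date: str) -> List[str]:
    """Build the argv that runs one task instance for the given date."""
    return ["airflow", "tasks", "test", dag_id, task_id, logical_date]


def backfill_command(dag_id: str, start_date: str, end_date: str) -> List[str]:
    """Build the argv that backfills a DAG over a date range (Airflow 2.x CLI)."""
    return [
        "airflow", "dags", "backfill", dag_id,
        "--start-date", start_date,
        "--end-date", end_date,
    ]


if __name__ == "__main__":
    # Hypothetical dates, used only to show the shape of the commands.
    commands = [
        task_test_command(DAG_ID, TASK_ID, "2015-01-01"),
        backfill_command(DAG_ID, "2015-01-01", "2015-01-02"),
    ]
    for argv in commands:
        print(" ".join(argv))
```

Building the commands as argument lists keeps them easy to pass to `subprocess.run` later without going through a shell.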
In the web UI, enable the example_bash_operator DAG on the home page.

When you first run Airflow, it creates the $AIRFLOW_HOME folder and an airflow.cfg file with defaults that will get you going fast. You can override the defaults using environment variables; see Configuration Reference. You can inspect the file either in $AIRFLOW_HOME/airflow.cfg or through the UI. The PID file for the webserver is stored in $AIRFLOW_HOME/airflow-webserver.pid or in /run/airflow/webserver.pid.
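Overriding a default through an environment variable, as mentioned above, relies on Airflow's `AIRFLOW__{SECTION}__{KEY}` naming convention (note the double underscores). The sketch below builds such a name for a given section and key of airflow.cfg. The helper name `airflow_env_var` is hypothetical, and the `core` / `load_examples` option is used only as an illustration.

```python
import os


def airflow_env_var(section: str, key: str) -> str:
    """Return the environment variable name that overrides [section] key in airflow.cfg."""
    return f"AIRFLOW__{section.upper()}__{key.upper()}"


if __name__ == "__main__":
    # Illustration: switch off the bundled example DAGs for this process
    # and for any child processes started from it.
    name = airflow_env_var("core", "load_examples")
    os.environ[name] = "False"
    print(name)  # prints AIRFLOW__CORE__LOAD_EXAMPLES
```

A variable set this way takes precedence over the value in airflow.cfg, so the file itself can stay untouched.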

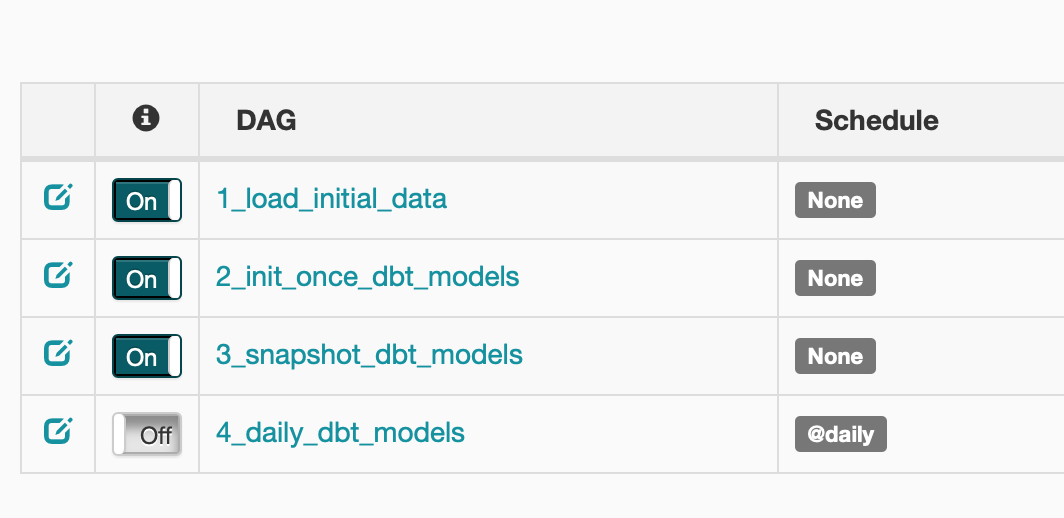

Successful installation requires a Python 3 environment. Starting with Airflow 2.3.0, Airflow is tested with Python 3.7, 3.8, 3.9, and 3.10.

Only pip installation is currently officially supported. While there have been successes with using other tools like Poetry or pip-tools, they do not share the same workflow as pip, especially when it comes to handling constraints. Installing via Poetry or pip-tools is not currently supported. If you wish to install Airflow using those tools, you should use the constraint files and convert them to the appropriate format and workflow that your tool requires.

The installation of Airflow is painless if you follow the instructions below. Airflow uses constraint files to enable reproducible installation, so using pip and constraint files is recommended.

Airflow requires a home directory and uses ~/airflow by default, but you can set a different location if you prefer. The AIRFLOW_HOME environment variable is used to inform Airflow of the desired location. Set this environment variable before installing Airflow so that the installation process knows where to store the necessary files.

Visit localhost:8080 in your browser and log in with the admin account details shown in the terminal.
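The installation steps described above, setting AIRFLOW_HOME first and then installing with pip against a constraint file, can be sketched as follows. The sketch assumes the constraint files are published per Airflow version and per Python version under the `constraints-<version>` tags of the apache/airflow GitHub repository. The helper names are hypothetical, and version 2.3.0 is used only because the text mentions it. The script prints the pip command rather than running it.

```python
import os
import sys
from pathlib import Path
from typing import Dict, List

# Assumed URL pattern for the published constraint files.
CONSTRAINTS_URL = (
    "https://raw.githubusercontent.com/apache/airflow/"
    "constraints-{airflow_version}/constraints-{python_version}.txt"
)


def resolve_airflow_home(env: Dict[str, str]) -> Path:
    """Return AIRFLOW_HOME if it is set, otherwise the default ~/airflow."""
    return Path(env.get("AIRFLOW_HOME", "~/airflow")).expanduser()


def constraint_url(airflow_version: str, python_version: str) -> str:
    """Build the constraint file URL for an Airflow and Python version pair."""
    return CONSTRAINTS_URL.format(
        airflow_version=airflow_version, python_version=python_version
    )


def pip_install_command(airflow_version: str, python_version: str) -> List[str]:
    """Build a pip command that pins Airflow and applies the matching constraints."""
    return [
        sys.executable, "-m", "pip", "install",
        f"apache-airflow=={airflow_version}",
        "--constraint", constraint_url(airflow_version, python_version),
    ]


if __name__ == "__main__":
    airflow_version = "2.3.0"  # illustration only; pick the release you need
    python_version = f"{sys.version_info.major}.{sys.version_info.minor}"
    print("AIRFLOW_HOME:", resolve_airflow_home(dict(os.environ)))
    print(" ".join(pip_install_command(airflow_version, python_version)))
```

Deriving the Python version from the running interpreter keeps the constraint file in step with the environment that pip installs into.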
